Why Agentic AI Will Fail Without the One Thing It Cannot See

There’s a dirty secret in every enterprise on the planet, and everyone who works in one knows it. The documentation is wrong.

Not wrong like a typo. Wrong like a map that’s missing 30 to 50 percent of the territory. Wrong in the way that matters most: the procedures manual says one thing, and the people who actually run the place do something quite different. They’ve always done something different, because the real world is messier than any document can capture, and humans are brilliant at adapting to mess.

In more than 20 years in technical documentation, I’ve seen this as the norm. Even with the tremendous time and effort it took to create user manuals and developer guides, we knew that, 99% of the time, the documents were left to collect dust on the shelves.

This has been true since the first org chart was drawn on a napkin. It wasn’t a crisis. It was just the way things worked. The gap between what the documentation said and what people actually did was filled by experience, intuition, sticky notes, whispered hallway conversations, and that one person in accounting who somehow knew how to make the quarterly close actually close. We called it “tribal knowledge” when we bothered to name it at all.

For fifty years, nobody needed to fix this because humans were always in the loop. They were the loop. The documentation was a rough sketch, and people colored it in every day with contextual judgment, improvised workarounds, and the kind of hard-won expertise that takes years to build and seconds to lose when someone walks out the door.

And then we invited the machines to the table.

The Moment Everything Changed

Goldman Sachs recently revealed that it has embedded engineers from Anthropic (the creator of Claude) directly into its teams to build autonomous agents that handle transaction accounting and know-your-customer (KYC) compliance. Work that previously required armies of junior accountants and compliance officers is now performed by AI.

The financial press called it the “SaaSpocalypse.” Software stocks cratered. Nearly $300 billion in market value evaporated in 48 hours as investors realized that if AI agents are the primary users of enterprise software, the entire seat-license business model is dead.

But the real story isn’t about software stocks. The real story is what those AI agents are not being told.

Goldman’s agents are executing complex, rules-based labor using documented procedures. They’re reading the manual. And as anyone who’s worked in compliance knows, the manual is a polite fiction. The real work of KYC is knowing that a particular wire transfer is fine because the client is mid-merger, sensing that a flagged transaction pattern is actually routine for that industry segment, understanding which escalation paths actually work at 4:47 PM on a Friday. None of that is in the documentation.

It lives in the heads of the people who’ve been doing the work for years. It is the human layer.

Right now, Goldman has a human-in-the-loop model: one person auditing the work of ten AI agents. The narrative is that this is responsible AI deployment. The human provides contextual judgment. The agent provides speed and scale. Beautiful partnership.

Except it’s not a partnership. It’s a countdown.

The Compression Cycle

Here’s what the celebratory press releases don’t say. The economic logic that drove the ratio from one human per task to one human per ten agents doesn’t stop at 1:10. It can’t. The CFO who greenlit the first wave of automation isn’t going to look at a 90% labor reduction and say, “That’s enough.” Competitors will push to 1:25. Then 1:50. The surviving humans won’t be auditing the agents’ work in any meaningful sense. They’ll be scanning dashboards for red flags that the agents themselves defined as red flags.

This is how the human-in-the-loop goes from safety net to security theater. Not because anyone made a cynical decision to cut corners, but because the math of compression makes meaningful oversight impossible. You cannot bring deep contextual judgment to 500 flagged items per day. Cognitive science is unambiguous on this point. You become a rubber stamp with a pulse.
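
A back-of-the-envelope sketch makes the math concrete. It rests on two assumptions of mine, not figures from any real deployment: a reviewer works one uninterrupted eight-hour shift, and the 500 flagged items per day correspond to the 1:10 ratio (so each agent flags 50 items a day). The function names are purely illustrative.

```python
# Assumption: one uninterrupted eight-hour shift, no meetings, no breaks.
SHIFT_MINUTES = 8 * 60

# Assumption: 500 flagged items per day at a 1:10 ratio, i.e. 50 per agent.
FLAGS_PER_AGENT = 500 / 10


def items_per_reviewer(agents_per_human: int, flags_per_agent: float) -> float:
    """Flagged items landing on one reviewer per day."""
    return agents_per_human * flags_per_agent


def minutes_per_item(items_per_day: float, shift_minutes: float = SHIFT_MINUTES) -> float:
    """Average review time available for each flagged item."""
    return shift_minutes / items_per_day


for ratio in (10, 25, 50):
    load = items_per_reviewer(ratio, FLAGS_PER_AGENT)
    seconds = minutes_per_item(load) * 60
    print(f"1:{ratio} -> {load:.0f} items/day, about {seconds:.0f} seconds each")
```

Under these assumptions, a reviewer at 1:10 has just under a minute per flagged item, and at 1:50 roughly twelve seconds. Whatever the real numbers turn out to be, the time per item shrinks in direct proportion to the ratio.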

And here’s the part that should terrify every board member in a regulated industry: as you compress the human layer, you’re not just reducing headcount. You’re destroying knowledge. At a 1:10 ratio, 90 of every 100 compliance analysts are let go, and they don’t just take their labor capacity with them. They take their enacted understanding of how compliance actually works at that specific organization. The informal risk signals. The unwritten escalation rules. The contextual pattern recognition built over decades of combined experience. Gone.

The remaining humans aren’t doing the work anymore. They’re monitoring. And monitoring a process you no longer practice is a fundamentally different cognitive activity than performing the process itself. Your expertise atrophies precisely when the system needs it most.

This is not a theoretical risk. This is the documented pattern of every automation wave in industrial history. The humans retained as “oversight” get progressively compressed until their role is ceremonial. And then something breaks, and there’s nobody left who understands why.

The Conspiracy of Silence

I’ve spent over fifteen years in enterprise transformation. IBM, Deloitte, Accenture, and a company called MAKE Technologies that specialized in legacy system migrations. I’ve watched billion-dollar modernization projects fail. Not once, not occasionally, but routinely. The industry failure rate for enterprise modernization is 70 to 80 percent. Let that number settle in. Seven or eight out of every ten transformation projects fail to deliver their expected value.

Why? Not because of technology. The technology works fine. They fail because the new system was built on documentation that was already incomplete. The architects read the procedures manual, the requirements spec, the process flows. They built exactly what the documents described. And then the organization tried to use it and discovered that the documents had been capturing maybe half of how the work actually got done.

Everybody knows this. The consultants know it. The project managers know it. The executives who approve the budgets know it. The practitioners who actually run the business definitely know it. They’ve been working around the documentation’s gaps for years. But nobody says it out loud because saying it out loud would mean admitting that the $200 million transformation is being built on a foundation of sand. Cognitive dissonance at industry scale.

I call this the conspiracy of silence. It’s not a conspiracy of malice. It’s a conspiracy of despair. Everyone knows the documentation is incomplete. Nobody has a viable way to fix it. So everyone pretends, the project launches, reality reasserts itself, and the failure gets attributed to “change management issues” or “scope creep” or some other euphemism that avoids the actual diagnosis: we tried to automate a system we didn’t understand.

Now multiply that dynamic by the speed and scale of agentic AI deployment and you begin to see the shape of the crisis. We’re not just building the next software system on incomplete documentation. We’re building autonomous decision-making agents on incomplete documentation. Agents that execute at machine speed with no contextual judgment, no gut feeling that something’s off, no ability to sense when the rules they’re following have diverged from the reality they’re operating in.

The machines never ask. That’s the architectural flaw at the heart of the entire agentic enterprise movement. AI systems assume completeness based on whatever documentation they were trained on. They don’t pause and say, “Hey, this procedure seems incomplete. Can I talk to someone who actually does this work?” They just execute. Confidently. At scale. And wrongly wherever the documentation is silent, which is the 30 to 50 percent of the territory the map leaves out, in ways that accumulate silently until a regulator or a market event exposes the drift.

What the Machines Cannot See

There’s a deeper philosophical point here that matters, and it connects to everything I’ve been writing about the relationship between natural intelligence and artificial intelligence.

AI is pattern matching at extraordinary scale. It is genuinely remarkable at what it does. But what it does is not what humans do. Humans don’t just follow rules. We sense when rules are wrong. We feel discomfort before we can articulate why. We read rooms. We detect political dynamics. We sense when a client is nervous about something they haven’t said. We carry what I think of as primal intelligence, a form of knowing that operates below the threshold of documentation, below language itself.

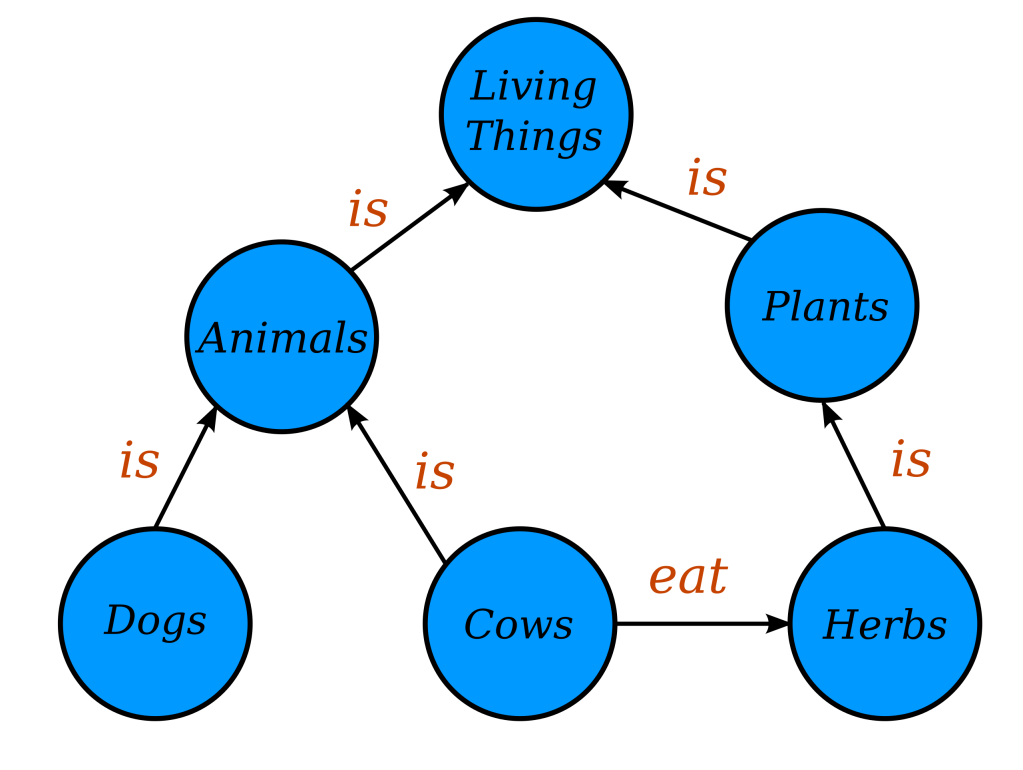

This isn’t mysticism. It’s the operational reality of how organizations function. The expert practitioner who “just knows” that a particular process needs to be handled differently on the last day of the quarter isn’t following a written rule. They’re drawing on years of embodied experience: pattern recognition built through repetition, failure, correction, and the slow accumulation of contextual understanding. It’s what the philosopher Michael Polanyi meant when he observed that “we know more than we can tell.”

For fifty years, computer science focused on making machines coherent with each other. Database schemas. API standards. Integration protocols. System interoperability. We got extraordinarily good at making machines talk to machines. What we never did, what we never even attempted, was make machines coherent with human reality. With the actual, messy, adaptive, context-dependent way that organizations function beneath the documented surface.

And now we’re deploying autonomous agents into that gap and wondering why they fail.

The Building Is on Fire

Here’s the timeline that keeps me up at night.

The Silver Tsunami, the mass retirement of experienced Baby Boomers, is accelerating. In critical industries like energy, utilities, manufacturing, and financial services, the people who carry the deepest organizational knowledge are walking out the door. Not in ten years. Now. Today. Every Friday afternoon, another retirement party, another decades-deep well of enacted knowledge gone forever.

At the same time, agentic AI deployment is scaling exponentially. Gartner predicts that by 2028, 33% of enterprise software applications will include agentic AI, up from less than 1% in 2024. They also predict, less prominently, that more than 40% of agentic AI projects will be canceled by the end of 2027 because of escalating costs, unclear business value, or inadequate risk controls. Those are the symptoms you would expect from agents that can’t function in real organizational contexts.

These two curves, knowledge leaving and agents arriving, are crossing right now. And the window to capture the human layer before it’s gone is closing. You cannot reconstruct enacted organizational knowledge from documentation after the practitioners have left. You can’t interview someone who’s retired to Florida. You can’t reverse-engineer twenty years of contextual judgment from a procedures manual that was already incomplete.

This is not a slow-burn strategic concern. This is a building on fire. The organizations that capture their human layer now, while the people who carry it are still in the building, will have a foundation for the agentic future. The organizations that don’t will discover, painfully and expensively, that their AI agents are operating on a map that’s missing half the territory.

The Roles That Emerge

I want to end on something that matters as much to me as any business case.

There’s a prevailing narrative that AI eliminates humans from the enterprise. That narrative is wrong, but not in the reassuring way most people mean when they say it’s wrong. The human-in-the-loop as currently conceived, the person monitoring the agents’ outputs, is indeed a transitional role that economic pressure will compress to near-zero. If your value proposition is “I review what the AI did,” your days are numbered.

But something else emerges from the rubble of the old model, and it’s genuinely new.

When you make the human layer visible, when you actually capture the enacted reality of how an organization functions, including the tacit knowledge, the workarounds, the cognitive improvisation, you don’t eliminate the need for humans. You change what humans do.

Organizational Cartographers. People who conduct ongoing conversations with practitioners to surface and map the enacted reality as it evolves. Not business analysts filling out templates. Skilled interlocutors who combine ethnographic sensitivity with systems thinking to draw the territory, not just the map.

Coherence Stewards. People who monitor the living delta between what the organizational model says and what the organization is actually doing. When a team develops a new workaround in response to a regulatory change, someone needs to recognize that as valuable adaptation rather than deviation. This role gets more important as agents scale, not less.

Semantic Mediators. People who facilitate resolution when the organizational reality reveals contradictions between how different departments enact the same process. These are judgment calls about values and priorities that agents structurally cannot make.
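
To make the “living delta” a Coherence Steward watches more concrete, here is a minimal sketch. It assumes, for illustration only, that a process can be reduced to a set of named steps; the step names and the `process_delta` helper are hypothetical, and real enacted knowledge is far richer than a set of labels.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class ProcessDelta:
    undocumented: frozenset[str]  # done in practice, absent from the manual
    unpracticed: frozenset[str]   # in the manual, not observed in practice


def process_delta(documented: set[str], enacted: set[str]) -> ProcessDelta:
    """Compare what the manual says with what practitioners actually do."""
    return ProcessDelta(
        undocumented=frozenset(enacted - documented),
        unpracticed=frozenset(documented - enacted),
    )


# Hypothetical step names, invented for this example.
documented_steps = {"verify identity", "screen sanctions list", "file report"}
enacted_steps = {"verify identity", "screen sanctions list", "call relationship manager"}

delta = process_delta(documented_steps, enacted_steps)
print("Undocumented but practiced:", sorted(delta.undocumented))
print("Documented but not practiced:", sorted(delta.unpracticed))
```

The code only surfaces the difference. Deciding whether an undocumented step is valuable adaptation or risky deviation remains the human judgment call these roles exist to make.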

These aren’t bullshit jobs invented to make humans feel needed. They’re roles that emerge from a genuine architectural requirement: organizational reality is a living system, not a static deposit. Markets shift. Regulations change. New products create new edge cases. The enacted system that you capture today is already drifting by next quarter, not because anyone made an error, but because adaptation to changing reality is what humans do. That’s why the gap exists in the first place. It’s not a bug. It’s the mechanism by which organizations stay alive.

The Choice

We are at a crossroads that most people in enterprise technology haven’t fully grasped yet.

One path leads to the current model, accelerated. Agents trained on documentation. Humans progressively compressed out. Knowledge atrophying silently. Drift accumulating until something breaks. In this model, humans are a cost to be minimized. The human layer (the enacted reality, the primal intelligence, the contextual judgment) is treated as an inconvenient residue of the pre-AI era, something to be automated away rather than valued.

The other path recognizes that the human layer isn’t a problem to be solved. It’s the source material for organizational intelligence. In this model, you capture the human layer as infrastructure (living, evolving, continuously updated) and build your agents on a foundation that includes the full organizational reality, not just the documented fraction of it. Humans focus on what they’re uniquely capable of: sensing new patterns, adapting to novel situations, making meaning, evolving the organizational model. Agents focus on what they’re good at: executing known patterns at speed and scale.

In the first model, the enterprise becomes brittle. Efficient, perhaps. Fast, certainly. But blind to its own drift and fragile in the face of change.

In the second model, the enterprise becomes coherent. Its systems and its humans are finally aligned, for the first time in the history of computing. Not because we eliminated the gap between documentation and reality, but because we finally made the reality visible and gave it equal standing with the documentation.

For fifty years, we built systems that were coherent with each other but not with us. We can do better now. The technology exists. The practitioners are still here, for now. The only question is whether we capture what they carry before they’re gone, or whether we let the most valuable organizational knowledge on the planet walk out the door while we celebrate our shiny new agents.

The building is on fire. The people who can put it out are inside. But they won’t be for long.

Chris Dollard is CEO and co-founder of ABRAXIS Inc., a company building organizational intelligence infrastructure for the agentic enterprise. He has spent 15+ years in enterprise transformation at IBM, Deloitte, Accenture, and MAKE Technologies. He writes about natural intelligence, artificial intelligence, and the space between them at chrisdollard.ca.